Improving Validation Rates on Your LabVIEW Projects

(Circle of LabVIEW: Part 6 of 6)

This is Circle of LabVIEW: Validation, the final post in a series describing the stages of the LabVIEW project life cycle, from idea to market. Although reading the posts in order will help you understand the bigger picture, each one is self-contained and includes a short section on the "RT Installer," a sample product used throughout the series.

Validation, in contrast to verification, is the process of determining whether the stakeholder goals have been met, in part or in whole. In LabVIEW projects, it’s common for stakeholder goals and functional requirements to be the same, but some projects, especially products and services, treat the requirements as a means to an end.

Validation is specific to the stakeholders of a given project or product. Stakeholders generally have a financial and/or functional investment in it, and they benefit from its acceptance, use, or adoption in the target market. Again, for LabVIEW projects, the stakeholder is often also the end user and the subject matter expert, so their validation is very close to the verification of functionality:

I need the system to perform this recipe automatically after I configure it to increase the efficiency of my project.

Once the functionality of the application has been successfully verified, it’s safe to say that it has also passed validation from the stakeholder’s perspective, because their process is more efficient.

Another example of a stakeholder goal is to increase process efficiency by 20%. Through testing and information gathering, the stakeholders found that the process takes about 10 minutes on average. After the project was completed and functionally verified, they repeated the same tests and found that the process now takes 6 minutes, a 40% reduction in process time. That exceeds the 20% target, so the project has been successfully validated against its stakeholder goal.
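The arithmetic behind this example can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this post, and "efficiency" can reasonably be measured either as time saved per run or as extra runs completed in the same time. Both measures clear the 20% goal here.

```python
def time_reduction(before_min: float, after_min: float) -> float:
    """Fraction of process time saved, e.g. 0.40 means 40% less time per run."""
    return (before_min - after_min) / before_min


def throughput_gain(before_min: float, after_min: float) -> float:
    """Fractional increase in runs completed per unit of time."""
    return before_min / after_min - 1.0


def meets_goal(before_min: float, after_min: float, goal: float) -> bool:
    """True if the time saved per run meets or exceeds the stakeholder goal."""
    return time_reduction(before_min, after_min) >= goal


# Figures from the example: 10 minutes before, 6 minutes after, 20% goal.
before, after, goal = 10.0, 6.0, 0.20

print(f"Time reduction:  {time_reduction(before, after):.0%}")
print(f"Throughput gain: {throughput_gain(before, after):.0%}")
print(f"Goal met:        {meets_goal(before, after, goal)}")
```

Agreeing with the stakeholders up front on which of these measures counts as "efficiency" avoids arguments about the result later.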

Validation and verification, although connected, are NOT the same thing. Validation is from the perspective of the stakeholders, while verification is often from the perspective of the developer (“Did we reach OUR goal?” vs. “Does it work as expected?”). Validation is often outside the scope of what Erdos Miller can provide, but we’re happy to provide support, bug fixes, and/or new features to help achieve stakeholder validation.

The stakeholder goal for the "RT Installer" was to create an application that is at least as efficient for deployment as NI Measurement and Automation Explorer (MAX), the RAD Utility, or the LabVIEW development environment, with the added ability to work with multiple targets. We put together the following tests to validate our application:

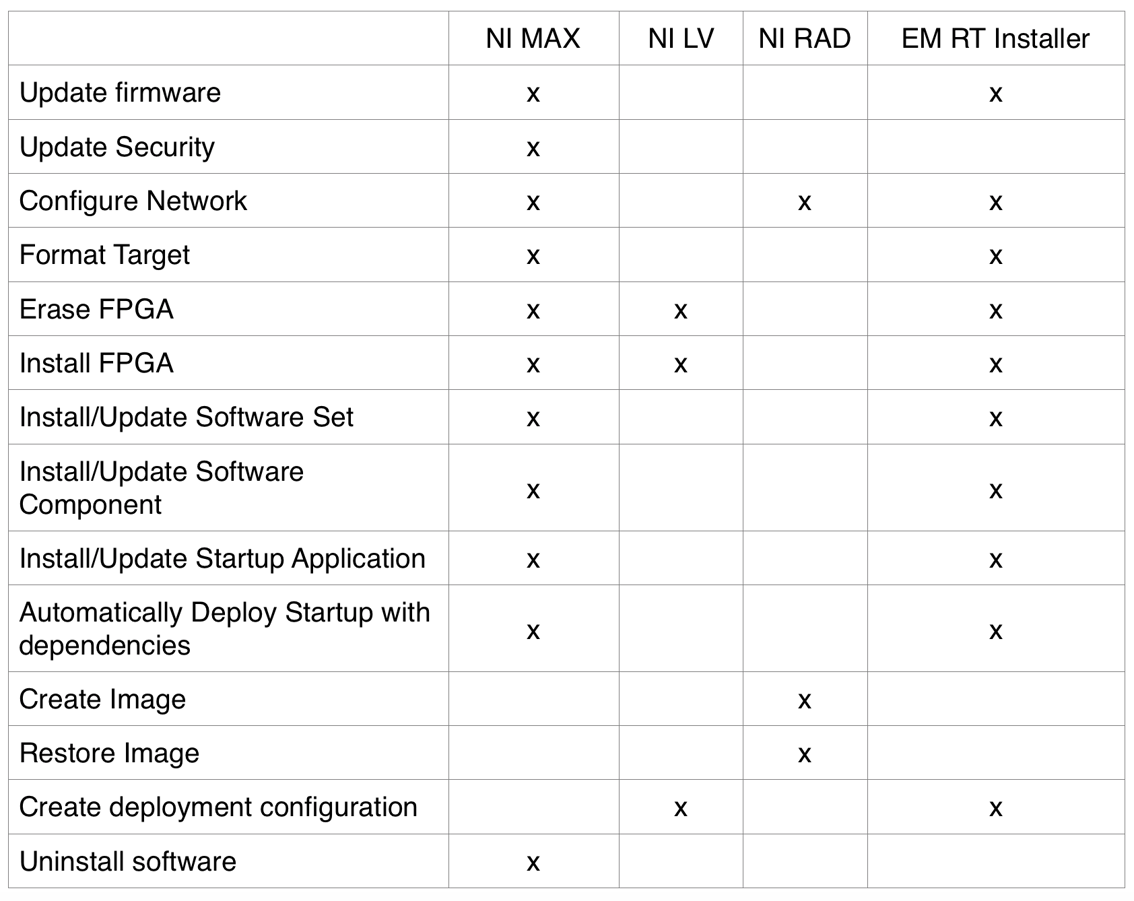

We can qualify our application by comparing it to alternative ways to perform the same functions. See the table below comparing common functions.

By this comparison, the RT Installer covers 71% of the functionality provided by NI MAX/LabVIEW/RAD overall: 81% of the functionality provided by NI MAX, 100% of the functionality provided by LabVIEW, and 33% of the functionality provided by the RAD Utility. This kind of validation is subjective, because it only counts functionality found in existing products and ignores functions that the RT Installer provides and no other tool does.
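A coverage percentage of this kind is simply the share of a reference tool's functions that the candidate tool also offers. The sketch below shows one way to compute it. The feature names are hypothetical placeholders chosen for illustration, not the rows of the actual comparison table, so the number it prints is not one of the results quoted above.

```python
def coverage(candidate: set[str], reference: set[str]) -> float:
    """Fraction of the reference tool's features that the candidate also provides."""
    if not reference:
        raise ValueError("reference feature set must not be empty")
    return len(candidate & reference) / len(reference)


# Hypothetical feature lists, for illustration only.
reference_tool = {"format target", "install software", "deploy bitfile", "set network config"}
candidate_tool = {"format target", "install software", "deploy bitfile", "multi-target deploy"}

print(f"Coverage of reference tool: {coverage(candidate_tool, reference_tool):.0%}")
```

Note that "multi-target deploy" does not raise the score, because the reference tool doesn't offer it. That is exactly the limitation of this kind of comparison.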

We can quantify our application by asking the question: “Is the RT Installer more efficient than NI MAX?” This can be answered by comparing manual software installation and FPGA bitfile installation.

The two videos below show the exact same process performed with each tool (the videos run at 4x speed):

Video of manual installation using MAX (above).

Video of manual installation using RT Installer (above).

What we found was that manual installation using NI MAX and LabVIEW (a common deployment method) takes about 7 minutes from the end of target formatting to successful deployment, while the RT Installer took 11 minutes for the same manual installation. This means that manual deployment with the RT Installer takes roughly 57% longer (11/7 ≈ 1.57) than manual deployment using MAX.
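The timing comparison can be double-checked with a short calculation. This is a minimal sketch using the rounded times quoted above. The function and variable names are our own, and because the inputs are approximate, the result is approximate too.

```python
def relative_slowdown(baseline_min: float, candidate_min: float) -> float:
    """How much longer the candidate takes, as a fraction of the baseline time."""
    return candidate_min / baseline_min - 1.0


max_minutes = 7.0            # manual installation with NI MAX and LabVIEW (approximate)
rt_installer_minutes = 11.0  # manual installation with the RT Installer

slowdown = relative_slowdown(max_minutes, rt_installer_minutes)
print(f"RT Installer took {slowdown:.0%} longer than the MAX workflow")
```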

Although validation appears to have passed qualitatively but failed quantitatively, these tests were kept as apples-to-apples as possible, so the additional functionality provided by the RT Installer wasn’t tested. In practice, the way the "RT Installer" is actually used may be more efficient than other existing methods.
